Molly Vs The Machines: How an algorithm turned a locked house into a lethal room

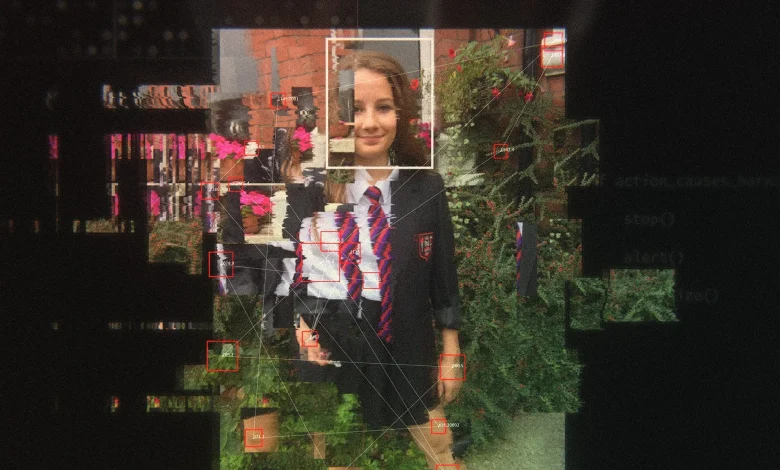

The documentary Molly Vs The Machines reframes a domestic tragedy as a technological failure: a 14-year-old girl took her own life after months of being fed self-harm and suicide material by automated feeds. The film and public records now raise a central challenge: what responsibilities do the designers of those feeds bear when their systems routinely deliver harm into a child’s private space?

What is not being told?

Verified facts:

– Molly Russell was 14 when she died by suicide after stepping into her bedroom and closing the door. The bedroom had been seen by her family as a safe space.

– In the six months before her death she was exposed to thousands of troubling items: one record cites 2,100 images and videos delivered to her, while coronial evidence noted she interacted with more than 2,000 harmful posts on Instagram in that final period.

– A coroner concluded that social media contributed “more than minimally” to her death.

– Friends Charlotte Campbell and Sophie Conlan describe Molly as sociable, musical and supportive; they say they were unaware of the intensity of what she was viewing privately.

– Ian Russell, Molly’s father, says that locking a front door could not protect his daughter from the stream of harmful material arriving on a smartphone in her bedroom, and he believes these harms were preventable.

Informed analysis:

The materials presented in the film and at the inquest draw a line from anonymous algorithmic recommendation to the personal despair experienced by a child. The verified counts of items and the coroner’s wording together indicate sustained exposure rather than an isolated encounter. Friends’ memories of Molly’s public, outgoing character contrast sharply with the hidden content she viewed, highlighting a rupture between offline identity and online feed. That rupture, as laid out by family testimony, reframes parental safety measures—locked doors, supervision—as incomplete if the device remains an unmediated conduit for algorithmic streams.

What does Molly Vs The Machines reveal?

The documentary Molly Vs The Machines presents a sequence of claims and reconstructions that compel three linked observations grounded in the record: first, automated recommendation systems delivered very large volumes of self-harm and suicide material to a vulnerable teenager; second, coronial findings attribute contributory weight to that online harm; third, close friends and family were unaware of the scale or character of the content that reached her. Together, these facts expose a collision between engineering choices and intimate human vulnerability.

The film stages that collision in personal terms: recreations of Molly’s room, testimony from her best friends Charlotte Campbell and Sophie Conlan about who Molly was at school, and on-camera statements from Ian Russell about his belief that technology companies played a causal role in his daughter’s death. The inquest record shows that a witness was disturbed by viewing the same material, which underscores that the evidence was not merely hearsay but consisted of tangible, disturbing items that affected adults as well as the child.

What must happen next?

Verified facts point to gaps: large volumes of harmful material reached a minor, the coroner attached significance to online content, and family members are publicly seeking change. The immediate accountability questions are procedural and technical — how are algorithmic recommendations audited for risk to minors, what thresholds trigger human review, and how are flagged harms surfaced to families and investigators? These are operational queries grounded in the surface facts the documentary brings together.

Informed analysis suggests a basic public requirement: transparent processes for assessing the downstream effects of engagement-driven recommendation systems on vulnerable users. If a coroner can state that online material contributed “more than minimally” to a death, policymakers and platform designers face an evidentiary imperative to show how their systems detect, limit and remediate sustained exposure to self-harm content for minors.

Verified fact: Molly Vs The Machines documents a case where a locked front door could not stop algorithmic feeds from entering a young person’s private room. Informed analysis: that fact reframes safety as a technological as well as a domestic responsibility and points to an urgent need for structural transparency and reform.